What do we actually mean by ‘Meaningful Data’ in schools?

The new Ofsted framework has again sparked heated debate, but on the whole teachers consider it a step in the right direction. It focuses on delivering a meaningful curriculum and encourages the use of meaningful data when tracking and monitoring progress.

When you hear the term “Meaningful Data”, the interpretation sounds obvious – it’s data that shows you something, right? But when you stop to think about it, what does that actually mean? What is it that really makes data “meaningful”?

What’s the purpose of data?

To discuss the purpose of data in schools, we need to take a step back and look at why we collect data in the first place.

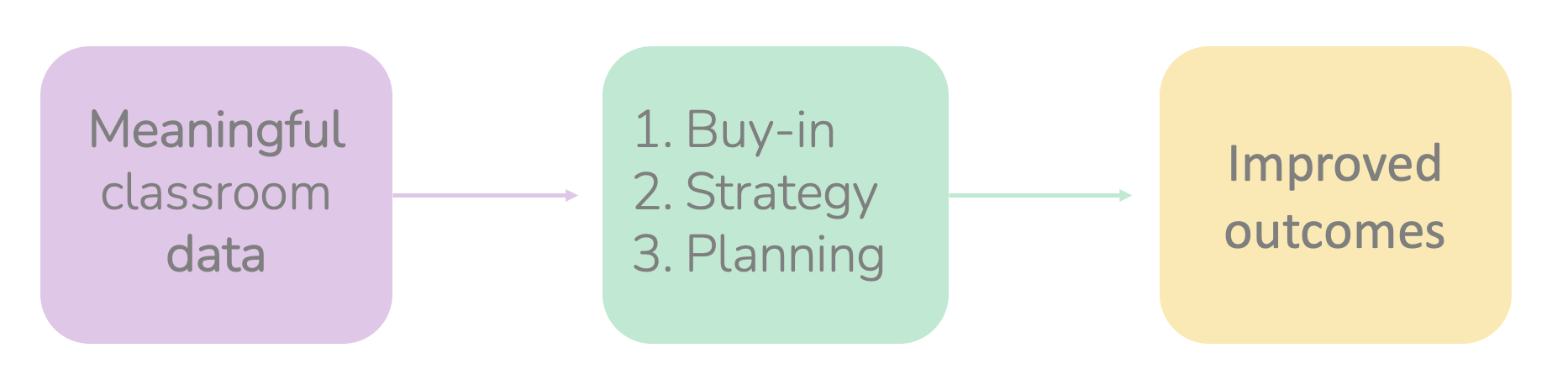

First, you need to look at whether the data you’re gathering is actually useful. If data is useful, then analysing and interpreting it will give you the information you need to support students in making progress. In other words, the data should provide you with clear actions to improve a student’s outcomes.

In our previous lives as teachers, we’d commonly use data to get buy-in by sharing it with all key stakeholders. This improved the attitude, enthusiasm and motivation of parents, students and teachers. We would also collect data to see the bigger picture of student performance, which enabled us to plan lessons more effectively.

What does meaningful data actually look like?

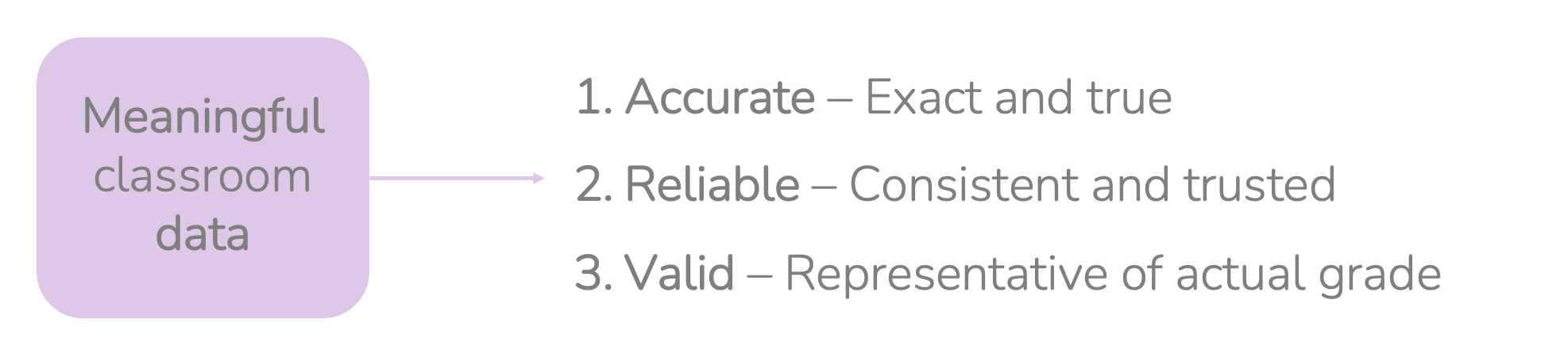

The education and training inspectorate for Wales, ESTYN, says that data needs to be ‘Accurate’, ‘Reliable’ and ‘Valid’ to be meaningful.

Ensuring data accuracy

I’m sure it goes without saying that the data that you’re using has to be as accurate as possible. But as with the phrase “meaningful” – what does “accurate” mean?

We’ve already identified that there are a couple of strands of data you’ll be using:

- Data that informs the progress of a skill being learned and / or knowledge being acquired

- Data that informs the overall picture of pupils’ performance across the school

Although both are inextricably linked, they have different considerations.

For data that informs the progress of skill or knowledge acquisition, you need to consider the accuracy of the assessments you’re using. To give an example: if ESTYN want to see whether pupils have learned or improved on the ability to answer a 9-mark question, then the sensible approach would be to use a 9-mark question from a previous exam – one that has been levelled appropriately, tackles the necessary assessment objectives, and has a full mark scheme with exemplar commentary. You wouldn’t have a stab at writing your own questions, then have a stab at creating your own example answers and commentary, and then a rough go at what a probable mark scheme looks like, would you?

This is why lots of teachers are moving to a model of quality over quantity. They aren’t chucking out multiple assessments that have no rigour and that can’t tell you exactly what you need to know. It’s vital to consider what the assessment you’re about to take time to create, use vital lesson time to deliver, and take time out of your own schedule to mark thoroughly is actually going to tell you. More importantly, can you trust that the data you get from it is going to be accurate?

When you’re considering data that is used to calculate the final overall grade or the current working-at grade, how do you tell whether this data is accurate? Start by asking yourself:

- Does the tracking system I’m using calculate student outcomes exactly as the exam board will do in the summer exam series?

- Does my tracking system take into consideration the weighting of each component and therefore the associated scaling factors?

- Am I using the very latest grade boundaries with the latest suggested potential percentage increases?

When deciding which groups you need to work with and individual pupils you need to target, it’s vital that this is based on incredibly accurate calculations: not calculations built on assumptions, or rough estimates based on how hard students work, or how good their attitude is.
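The three questions above can be sketched in a few lines of Python. This is only an illustration of the idea, not any exam board’s actual method: the component names, marks, scaling factors and grade boundaries below are all made-up figures, and the helper names (`Component`, `component_weightings`, `scaled_total`, `grade_for`) are hypothetical. In practice you would take the real scaling factors and boundaries from your exam board’s published documents.

```python
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    raw_mark: float        # marks the pupil achieved
    max_mark: float        # marks available on the component
    scaling_factor: float  # hypothetical; use your exam board's published value


def component_weightings(components):
    """Share of the qualification each component carries after scaling."""
    scaled_max = {c.name: c.max_mark * c.scaling_factor for c in components}
    overall_max = sum(scaled_max.values())
    return {name: value / overall_max for name, value in scaled_max.items()}


def scaled_total(components):
    """Sum of each raw mark multiplied by its component's scaling factor."""
    return sum(c.raw_mark * c.scaling_factor for c in components)


def grade_for(total, boundaries):
    """Highest grade whose minimum mark the total reaches, or "U" if none.

    `boundaries` maps a grade label to the minimum scaled mark needed for it.
    """
    ordered = sorted(boundaries.items(), key=lambda item: item[1], reverse=True)
    for grade, minimum in ordered:
        if total >= minimum:
            return grade
    return "U"


# Illustrative figures only, not real exam board data.
components = [
    Component("Paper 1", raw_mark=52, max_mark=80, scaling_factor=1.0),
    Component("Paper 2", raw_mark=45, max_mark=80, scaling_factor=1.0),
    Component("Coursework", raw_mark=30, max_mark=40, scaling_factor=2.0),
]
boundaries = {"9": 210, "8": 190, "7": 170, "6": 150, "5": 130, "4": 110}

weightings = component_weightings(components)
total = scaled_total(components)
grade = grade_for(total, boundaries)
print(weightings)
print(total, grade)
```

Notice that the coursework is marked out of 40 but, once its scaling factor is applied, it carries the same weight as each paper. A tracker that simply added the raw marks together would under-count it, which is exactly the kind of inaccuracy the questions above are meant to catch.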

Data reliability

Reliability: “the quality of being trustworthy or of performing consistently well.”

Reliability considers the process through which data is produced. When we say that a car is reliable, or that a person is reliable, the undertone is always consistency. In these examples, reliability means being able to continually deliver, continually perform, and continually repeat the same action. The same can be said of data: reliable data is produced by an assessment system that is itself reliable, for example a tried, tested and trusted method of assessing that provides consistent accuracy.

For example, let’s say you’re using a tried and tested piece of educational software like ours to prepare your assessments. This means there will be consistency from assessment to assessment within each class, as well as consistency across each department between the teachers in that team. On the other hand, imagine that you have one teacher writing their own assessments and mark schemes, another using a platform to provide some questions, and another using tests from a friend who works at a different school. It’s clear that in this case the overall picture of where the pupils are working can’t be trusted: there is no consistency, and no standardised approach to how the final outcome is calculated. Put simply, the information isn’t coming from a reliable, trusted, or consistent source.

Drawing on the example of the 9-mark question in the ‘Ensuring data accuracy’ section above, a question from a past paper with an accredited mark scheme and published exemplar answers can be trusted. Having it marked by an examiner, or by someone with experience of marking multiple paper series, would further increase the reliability of the data being calculated.

Data validity

Validity: “the quality of being logically or factually sound; soundness or cogency.”

There’s a very thin line between reliability and validity. Depending on the source, both definitions can include the word “trust” or “trustworthy”. To help your understanding, here’s the process I used to produce data or calculate a final outcome.

When calculating the working grades for the pupils I taught, I would always replicate the method of calculation used by the exam boards. This replication of the exam board’s actual process increased the validity of the data I was creating. When you ask the question: “is this a valid method of calculating the working at grade?”, then it starts to make sense. Yes, it is valid – because it’s the same methodology used by the exam board. This is where the blurred line of this also being “trustworthy” creeps in.

We can also ask the same question of the methods of assessment we use, for example: “is there validity in asking pupils to sit a mock exam?” The answer is yes, because it replicates the final assessment method AND the process that the pupil will need to go through, preparing them for the final experience as well as providing useful data on how they perform against the mark scheme. When you ask yourself questions of this nature, you start to see that the validity of your data depends on whether the process you’re using to assess, track and monitor replicates, as closely as possible, the process that pupils will go through in their final exams and assessments. The closer the match, the higher the validity of your data.

In closing…

Data is only meaningful if these three key points have been considered:

- Accuracy

- Reliability

- Validity

All are equally important, and that’s why they have been identified by ESTYN in their school inspection framework. The key message from this is that we need to consider the quality of the data being used.

When you consider the core components that ensure your data is meaningful, it makes perfect sense that we can’t be using data that isn’t accurate, isn’t reliable and has no validity. Ensuring the quality of our school data will have a huge impact on the one thing that we’re all trying to achieve: improving outcomes for the pupils we teach.

Brett Griffin

Founder and Chief Executive Officer
